During the Covid-19 pandemic, social distancing proved to be an effective way of reducing the spread of contagious viruses. People are advised to avoid close contact as much as possible because of the potential for disease transmission. Many public spaces, including workplaces, banks, bus terminals, and train stations, struggle to keep people at a safe distance.

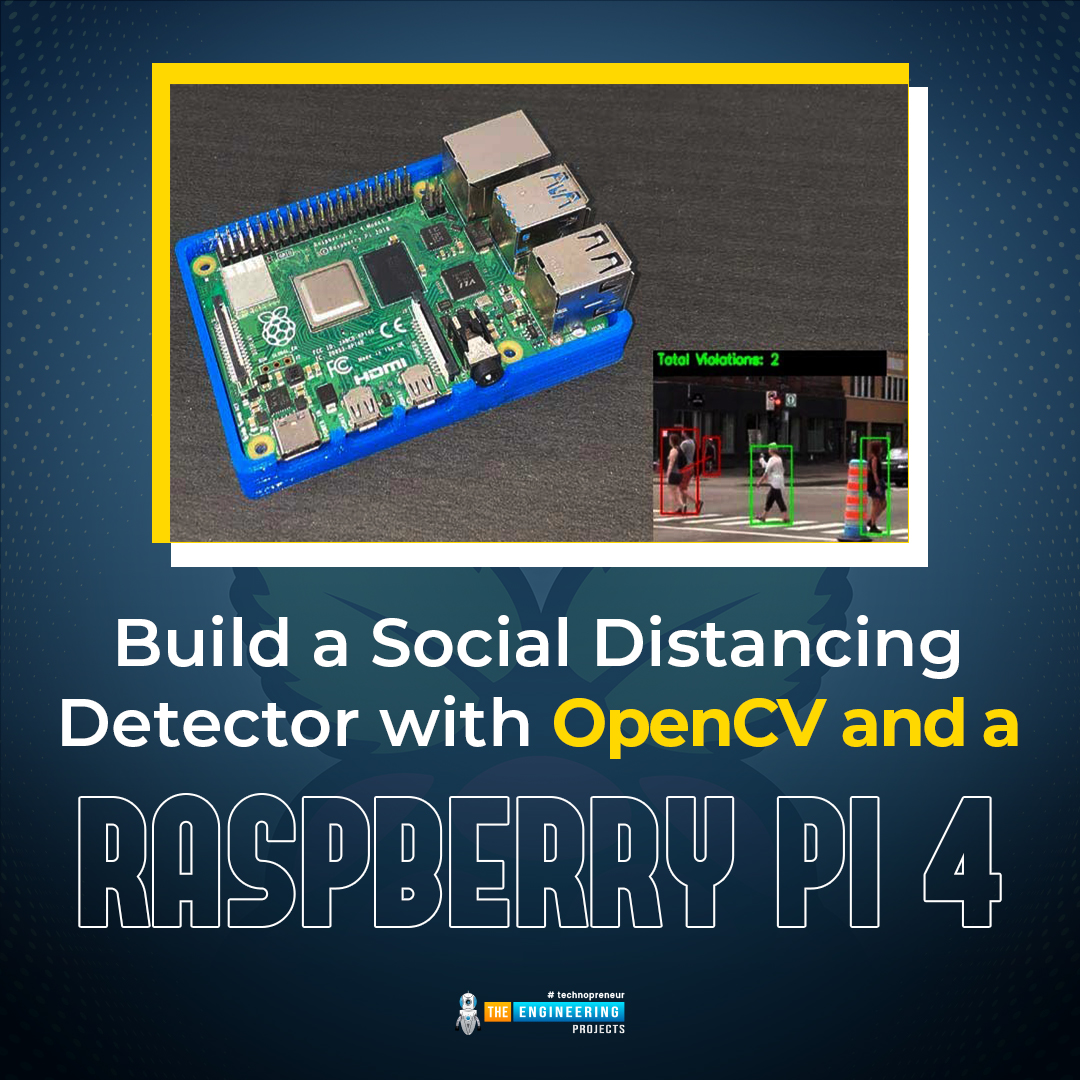

The previous guide covered the steps necessary to connect the PCF8591 ADC/DAC Analog Digital Converter Module to a Raspberry Pi 4. We saw the results displayed as integers in the terminal and examined exactly how the ADC produces its output signals. In this article, we will use OpenCV and a Raspberry Pi to create a system that detects when people are not keeping a safe distance from one another. We will use the weights of the YOLO version 3 object detection algorithm together with OpenCV's Deep Neural Network module. Among comparable boards, the Raspberry Pi is a popular choice for image processing tasks. Previous projects that used the Raspberry Pi for advanced image processing included a face recognition application.

Where To Buy?

| No. | Components | Distributor | Link To Buy |
|---|---|---|---|
| 1 | Jumper Wires | Amazon | Buy Now |
| 2 | PCF8591 | Amazon | Buy Now |
| 3 | Raspberry Pi 4 | Amazon | Buy Now |

Components

Raspberry Pi 4

This project needs a Raspberry Pi 4 with OpenCV installed. OpenCV handles the digital image processing, which is often used for people counting, facial identification, and detecting objects in images.

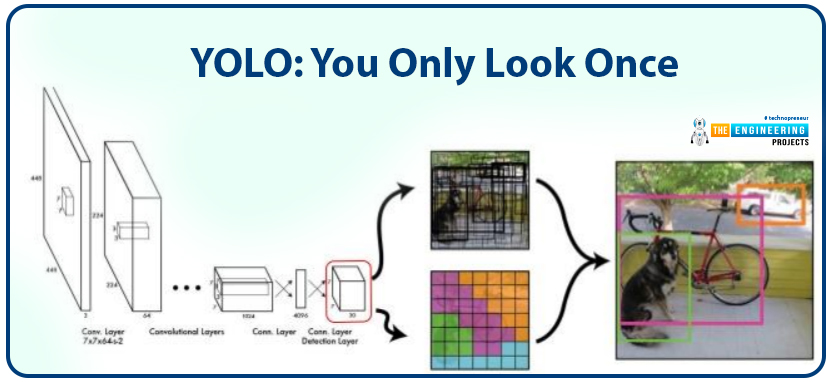

YOLO

YOLO (You Only Look Once) is a convolutional neural network (CNN) for real-time object detection. YOLOv3, the version used in this project, is a fast and accurate object detection algorithm that can identify eighty distinct types of objects in both images and video. The algorithm runs a single neural network over the entire image, divides the image into regions, and computes bounding boxes and probabilities for each region. The YOLO base model processes images in real time at 45 frames per second on a high-end GPU. Compared to other detection approaches, such as SSD and R-CNN, YOLO is generally faster, which matters on hardware as limited as the Raspberry Pi.

In the past, computers relied on input devices like keyboards and mice; today, they can also analyze data from visual sources like photos and videos. Computer vision is a computer's (or a machine's) capacity to read and interpret visual data. It has advanced to the point that it can now identify people and objects and even estimate their emotions. This is possible because of deep learning and artificial intelligence, which allow an algorithm to learn from examples, such as learning to recognize the relevant features in images. The technology has matured to the point where it can be employed in critical infrastructure protection, hotel management, and online banking payment portals.

OpenCV is one of the most widely used computer vision libraries. It is a free, open-source, cross-platform library originally developed by Intel, and it runs on Windows, macOS, and Linux. Because it runs on Linux, OpenCV also works on a small computer like the Raspberry Pi, which opens up a wide range of applications. Let's dive in, then.

Setup of OpenCV on a Raspberry Pi 4

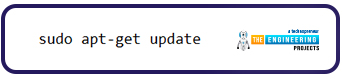

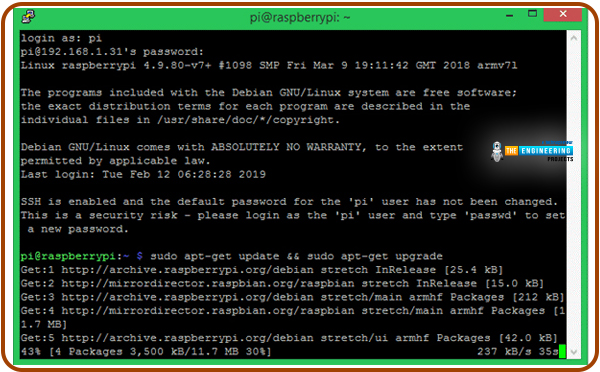

Before installing OpenCV and its prerequisites, update the Raspberry Pi's package lists. To do so, type in the following command:

sudo apt-get update

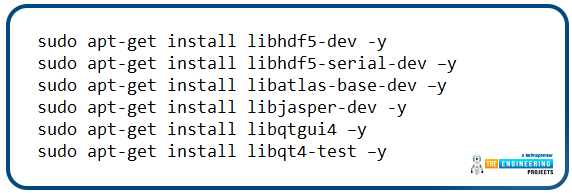

Then, use the commands below to set up the prerequisites on your RPi so you can install OpenCV.

sudo apt-get install libhdf5-dev -y

sudo apt-get install libhdf5-serial-dev -y

sudo apt-get install libatlas-base-dev -y

sudo apt-get install libjasper-dev -y

sudo apt-get install libqtgui4 -y

sudo apt-get install libqt4-test -y

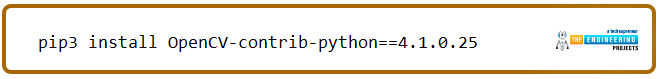

Finally, run the following command to install OpenCV on your Raspberry Pi.

pip3 install opencv-contrib-python==4.1.0.25

Setting up OpenCV using CMake

OpenCV's installation on a Raspberry Pi can be nerve-wracking because it takes a long time, and there's a good possibility you'll make a mistake. Given my own experiences with this, I've tried to make this lesson as straightforward and helpful as possible so that you won't have to go through the same things I did. Even though OpenCV 4.0.1 had been out for three months when I started writing this lesson, I decided to use the older version (4.0.0) because of some issues with compiling the newer version.

This approach involves downloading OpenCV's source package and compiling it on a Raspberry Pi with the help of CMake. Installing OpenCV in a virtual environment allows users to run several versions of Python and OpenCV on the same computer. I'm not going to do that here, since I'd rather keep this tutorial brief and I don't anticipate needing it any time soon.

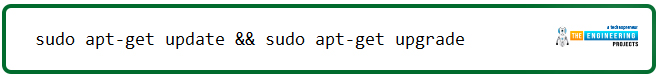

Step 1: Before we get started, let's ensure that our system is up to date by executing the command below:

sudo apt-get update && sudo apt-get upgrade

If there are updated packages, they should be downloaded and installed automatically. There is a 15-20 minute wait time for the process to complete.

Step 2: We must now refresh the apt-get package lists so that we can download CMake.

sudo apt-get update

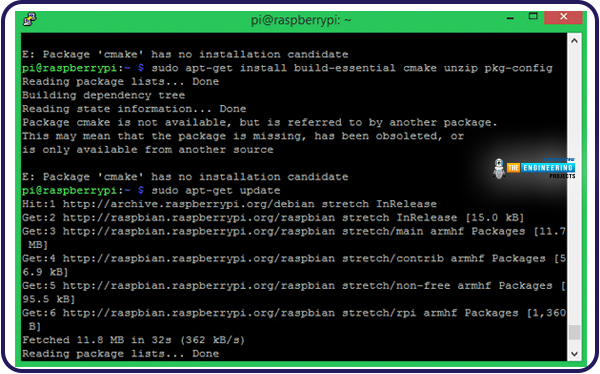

Step 3: When we've finished updating apt-get, we can use the following command to retrieve the CMake package and put it in place on our machine.

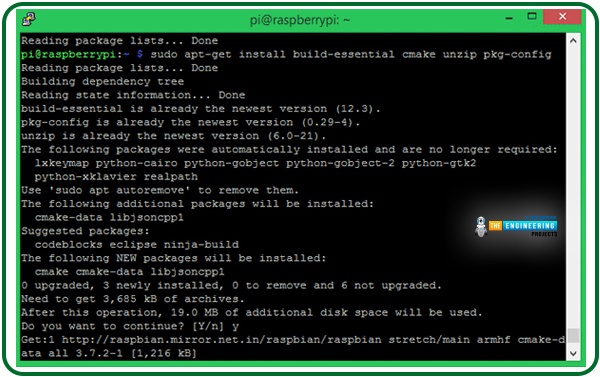

sudo apt-get install build-essential cmake unzip pkg-config

When installing CMake, your screen should look similar to the one below.

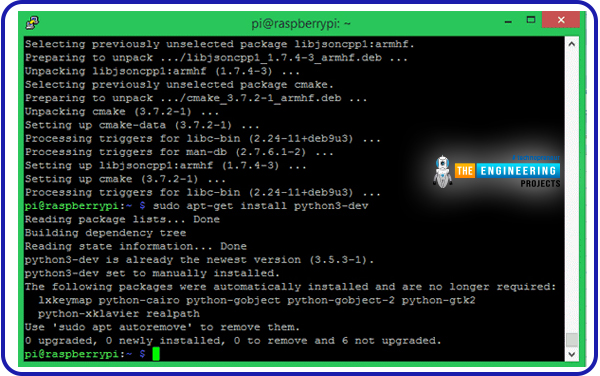

Step 4: Then, use the following command to set up Python 3's development headers:

sudo apt-get install python3-dev

Since it was pre-installed on mine, the screen looks like this.

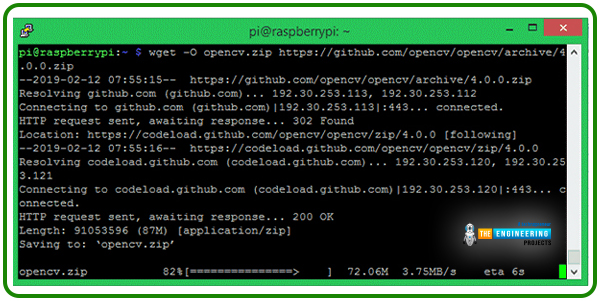

Step 5: The next step is to download the OpenCV archive from GitHub. Use the following command:

wget -O opencv.zip https://github.com/opencv/opencv/archive/4.0.0.zip

Note that we are downloading version 4.0.0.

Step 6: The OpenCV contrib repository contains various extra modules that will make our development efforts more efficient. Therefore, let's download that as well with the command shown below.

wget -O opencv_contrib.zip https://github.com/opencv/opencv_contrib/archive/4.0.0.zip

The "opencv.zip" and "opencv_contrib.zip" files should now be in your home directory. You can list the directory contents to confirm.

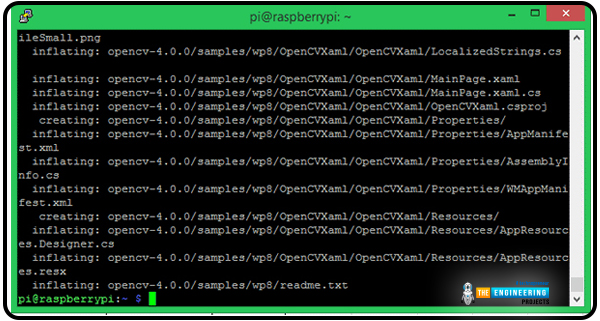

Step 7: Let's extract OpenCV 4.0.0 from its .zip archive with the following command.

unzip opencv.zip

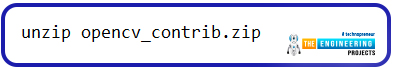

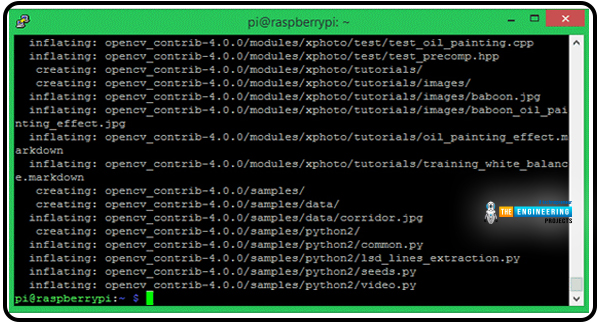

Step 8: Extract the OpenCV contrib 4.0.0 archive in the same way.

unzip opencv_contrib.zip

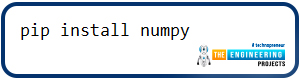

Step 9: OpenCV cannot function without NumPy. Run the command below to install it.

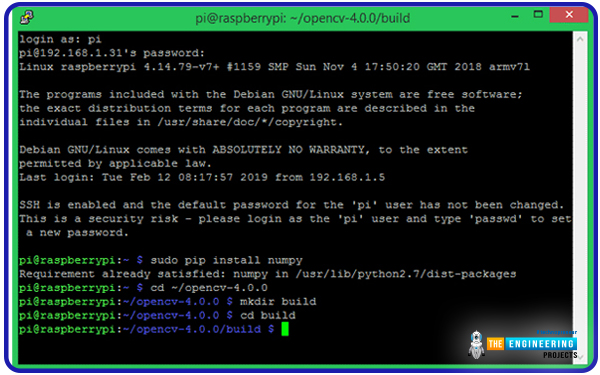

pip3 install numpy

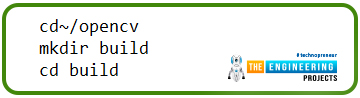

Step 10: The home directory should now contain two folders: "opencv-4.0.0" and "opencv_contrib-4.0.0". Next, we'll make a new directory named "build" inside opencv-4.0.0 to perform the actual compilation of the OpenCV library. The commands are shown below.

cd ~/opencv-4.0.0

mkdir build

cd build

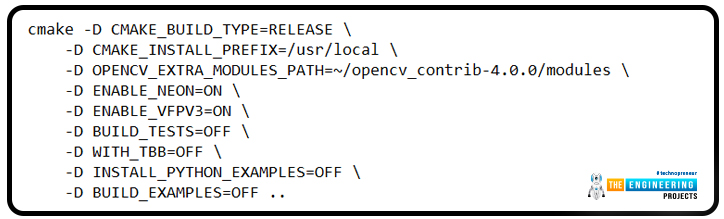

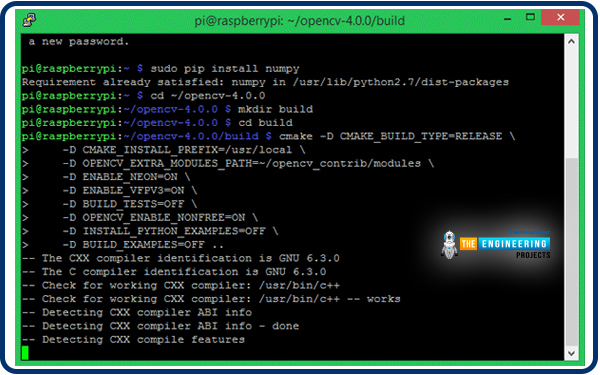

Step 11: OpenCV's CMake configuration must now be run. In this step, we specify the options for compiling OpenCV. Verify that you are in the "~/opencv-4.0.0/build" directory. Then, paste the lines below into the Terminal.

cmake -D CMAKE_BUILD_TYPE=RELEASE \
    -D CMAKE_INSTALL_PREFIX=/usr/local \
    -D OPENCV_EXTRA_MODULES_PATH=~/opencv_contrib-4.0.0/modules \
    -D ENABLE_NEON=ON \
    -D ENABLE_VFPV3=ON \
    -D BUILD_TESTS=OFF \
    -D WITH_TBB=OFF \
    -D INSTALL_PYTHON_EXAMPLES=OFF \
    -D BUILD_EXAMPLES=OFF ..

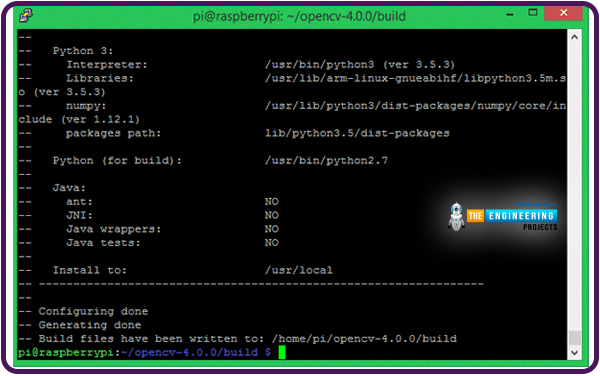

Hopefully, the configuration will proceed without a hitch, and you'll see "Configuring done" and "Generating done" in the output.

If you encounter an issue during this procedure, check that the correct path was entered and that the "opencv-4.0.0" and "opencv_contrib-4.0.0" directories exist in your home directory.

Step 12: This is the most time-consuming step. Use the following command to compile OpenCV, making sure you are in the "~/opencv-4.0.0/build" directory.

make -j4

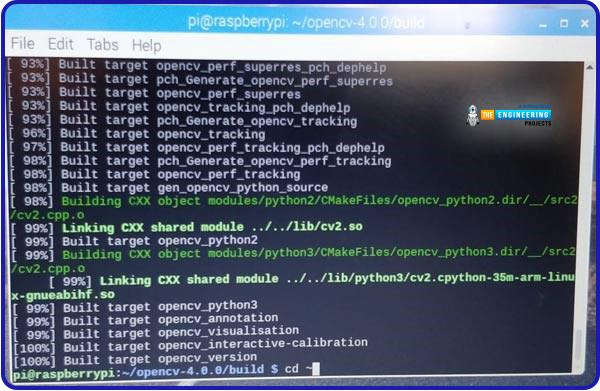

This starts the OpenCV compilation, and the progress is shown as a percentage. After three to four hours, you will see a completed build screen.

The command "make -j4" uses all four processor cores when compiling OpenCV. The build may appear to stall at 99%, which can test your patience, but it is worth the wait.

In my case, the build hung, so after waiting an hour I cancelled it and ran "make -j1" instead, which did the trick. It is advisable to start with "make -j4", since that uses all four of the Pi's cores and completes most of the compilation, and to fall back to "make -j1" only if the build stalls.

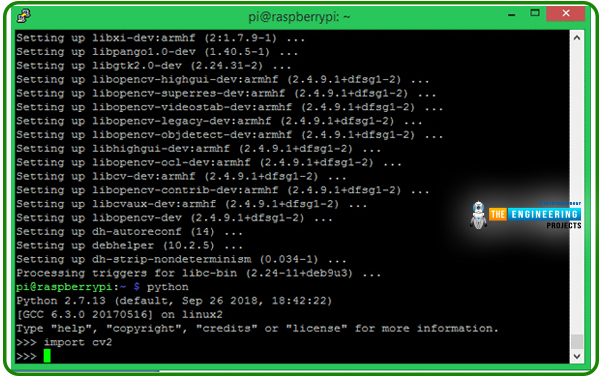

Step 13: If you are at this point, congratulations. You have made it through the entire procedure with flying colors. The final action is to run the following command to install libopencv.

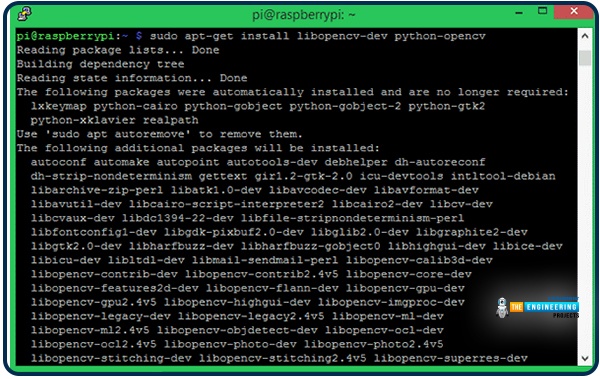

sudo apt-get install libopencv-dev python-opencv

Step 14: Finally, verify that the library was installed successfully by running "import cv2" in Python. You should not get any error message when you do this.
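
The check described in Step 14 can be sketched as a short script. This is only an illustration that uses the standard library; the helper name `installed_version` is hypothetical, and it reports a missing module instead of raising an error.

```python
import importlib


def installed_version(module_name):
    """Return the module's version string, or None if it cannot be imported."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return None
    # cv2 and numpy expose __version__; fall back to "unknown" for
    # modules that do not define it.
    return getattr(module, "__version__", "unknown")


for name in ("cv2", "numpy", "imutils"):
    version = installed_version(name)
    print(name, "->", version if version else "NOT INSTALLED")
```

If "cv2" is reported as not installed, revisit the installation steps before moving on.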

Installing the Additional Packages on the Raspberry Pi

Let's get the necessary packages set up on the Raspberry Pi before we begin writing the code for the social distance detector.

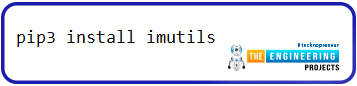

Installing imutils

imutils is designed to simplify the use of OpenCV for standard image processing tasks like translating, rotating, resizing, skeletonizing, and displaying images via Matplotlib. To install imutils, type in the following command:

pip3 install imutils

A Breakdown of the Program

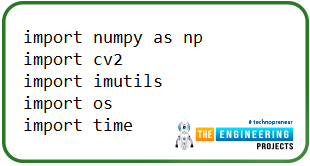

The complete code may be found at the bottom of the page. In this section, we'll walk you through the most crucial parts of the code so you can understand it better. All the necessary libraries for this project should be imported at the beginning of the code.

import numpy as np

import cv2

import imutils

import os

import time

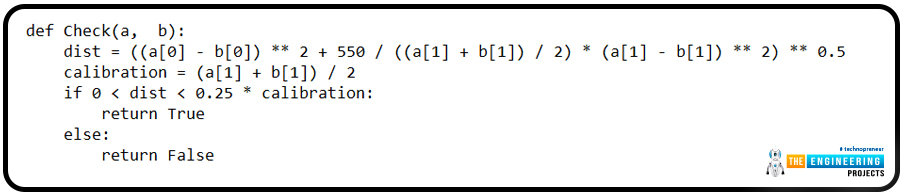

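The Check() function decides whether two detected people in a video frame are too close together. The points a and b are the centers of the two bounding boxes. The function computes a distance that resembles the Euclidean distance but weights the vertical difference by 550 divided by the mean y-coordinate of the two points, and it compares the result against a threshold of one quarter of that mean y-coordinate.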

def Check(a, b):
    dist = ((a[0] - b[0]) ** 2 + 550 / ((a[1] + b[1]) / 2) * (a[1] - b[1]) ** 2) ** 0.5
    calibration = (a[1] + b[1]) / 2
    if 0 < dist < 0.25 * calibration:
        return True
    else:
        return False
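
To see how the threshold behaves, here is a small worked sketch that repeats the same formula under hypothetical names (`weighted_distance`, `is_too_close`) and applies it to made-up pixel coordinates. The sample points are assumptions chosen for illustration, not values from the test video.

```python
def weighted_distance(a, b):
    """Horizontal gap plus a vertical gap scaled by 550 / mean_y (same formula as Check)."""
    mean_y = (a[1] + b[1]) / 2
    return ((a[0] - b[0]) ** 2 + 550 / mean_y * (a[1] - b[1]) ** 2) ** 0.5


def is_too_close(a, b):
    """Same decision as Check(): True when 0 < distance < 0.25 * mean_y."""
    mean_y = (a[1] + b[1]) / 2
    return 0 < weighted_distance(a, b) < 0.25 * mean_y


# Two people 90 pixels apart near the top of the frame (y = 200):
# the threshold is 0.25 * 200 = 50, so they count as far enough apart.
print(is_too_close((100, 200), (190, 200)))  # False

# The same 90-pixel gap lower in the frame (y = 400):
# the threshold is 0.25 * 400 = 100, so they count as too close.
print(is_too_close((100, 400), (190, 400)))  # True
```

Because the threshold grows with the y-coordinate, the same pixel gap is judged more strictly for people lower in the frame, who are usually nearer to the camera. Note that the formula divides by the mean y-coordinate, so two points with y = 0 would raise a ZeroDivisionError.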

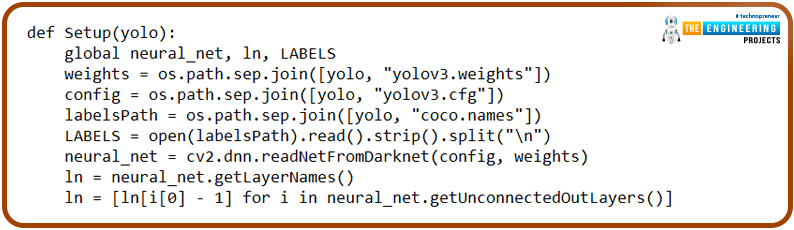

The Setup() function sets the locations of the YOLO weights, the configuration file, and the COCO names file. The os.path module provides everything needed for ordinary pathname handling; here, os.path.sep.join() joins the path components with the operating system's path separator. The cv2.dnn.readNetFromDarknet() function loads the network from the configuration file and the saved weights. Once the weights have been loaded, the names of the network layers can be retrieved with the getLayerNames() method, and getUnconnectedOutLayers() identifies the output layers.

def Setup(yolo):
    global neural_net, ln, LABELS
    weights = os.path.sep.join([yolo, "yolov3.weights"])
    config = os.path.sep.join([yolo, "yolov3.cfg"])
    labelsPath = os.path.sep.join([yolo, "coco.names"])
    LABELS = open(labelsPath).read().strip().split("\n")
    neural_net = cv2.dnn.readNetFromDarknet(config, weights)
    ln = neural_net.getLayerNames()
    ln = [ln[i[0] - 1] for i in neural_net.getUnconnectedOutLayers()]
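
The last line of Setup() indexes each output-layer id with `i[0]`, which assumes the ids arrive as one-element rows. Depending on the OpenCV version, getUnconnectedOutLayers() may instead return a flat sequence of ids, in which case `i[0]` fails. The sketch below, using a hypothetical helper `output_layer_names` and a made-up layer list, shows one way to accept both shapes.

```python
def output_layer_names(layer_names, out_layer_ids):
    """Map 1-based output-layer ids to layer names.

    Accepts nested one-element rows ([[3], [5]]) as well as a flat
    sequence of ids ([3, 5]).
    """
    names = []
    for layer_id in out_layer_ids:
        if hasattr(layer_id, "__len__"):  # a row such as [3]
            layer_id = layer_id[0]
        names.append(layer_names[int(layer_id) - 1])
    return names


# Shortened, made-up layer list for illustration only.
layers = ["conv_0", "relu_1", "yolo_82", "conv_83", "yolo_94"]
print(output_layer_names(layers, [[3], [5]]))  # ['yolo_82', 'yolo_94']
print(output_layer_names(layers, [3, 5]))      # ['yolo_82', 'yolo_94']
```

With the OpenCV versions pinned in this tutorial, the original line works as written; the helper is only worth considering if you run the code on a different release.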

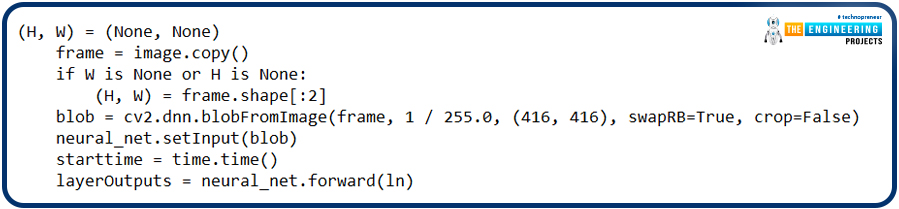

In the image processing section, we take a single frame from the video and analyze it to find the distance between the people in the crowd. The function first initializes the frame's height and width to None and then reads them from the frame's shape. Next, we use the cv2.dnn.blobFromImage() method to convert the frame into a blob, the input format the network expects. The blob function scales the pixel values, resizes the frame to 416x416, and swaps the red and blue channels.

(H, W) = (None, None)
frame = image.copy()
if W is None or H is None:
    (H, W) = frame.shape[:2]
blob = cv2.dnn.blobFromImage(frame, 1 / 255.0, (416, 416), swapRB=True, crop=False)
neural_net.setInput(blob)
starttime = time.time()
layerOutputs = neural_net.forward(ln)

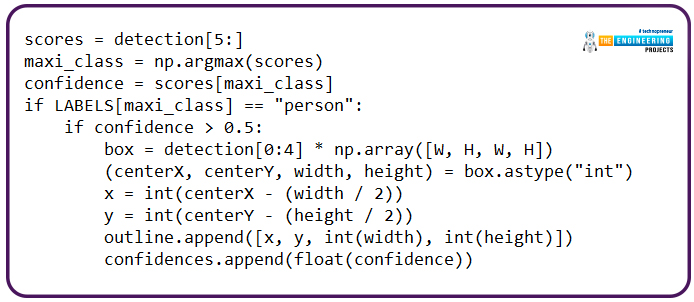

YOLO's layer outputs are arrays of numerical values. Each detection contains the box coordinates followed by a score for every class, and the class with the highest score is taken as the detected object. To identify persons, we iterate over all layerOutputs and keep only the detections whose best class is "person" with a confidence above 0.5. Each detection yields a bounding box described by the X and Y coordinates of its center as well as its width and height.

scores = detection[5:]
maxi_class = np.argmax(scores)
confidence = scores[maxi_class]
if LABELS[maxi_class] == "person":
    if confidence > 0.5:
        box = detection[0:4] * np.array([W, H, W, H])
        (centerX, centerY, width, height) = box.astype("int")
        x = int(centerX - (width / 2))
        y = int(centerY - (height / 2))
        outline.append([x, y, int(width), int(height)])
        confidences.append(float(confidence))
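
The decoding of a single detection can be traced by hand with a plain-Python sketch. The helper name `decode_detection`, the shortened label list, and the sample row are all assumptions made for illustration; a real YOLOv3 row trained on COCO carries eighty class scores rather than three.

```python
def decode_detection(detection, frame_w, frame_h, labels, min_conf=0.5):
    """Turn one output row into (label, confidence, [x, y, w, h]) or None.

    Row layout: [center_x, center_y, width, height, objectness, score_0, ...],
    with the first four values normalized to the 0..1 range.
    """
    scores = detection[5:]
    best = max(range(len(scores)), key=scores.__getitem__)  # plain-Python argmax
    confidence = scores[best]
    if confidence <= min_conf:
        return None
    center_x = int(detection[0] * frame_w)
    center_y = int(detection[1] * frame_h)
    width = int(detection[2] * frame_w)
    height = int(detection[3] * frame_h)
    # Convert the box center to its top-left corner for drawing.
    x = int(center_x - width / 2)
    y = int(center_y - height / 2)
    return labels[best], float(confidence), [x, y, width, height]


labels = ["person", "bicycle", "car"]  # shortened stand-in for coco.names
row = [0.5, 0.5, 0.25, 0.5, 0.9, 0.8, 0.1, 0.05]
print(decode_detection(row, 480, 270, labels))
# ('person', 0.8, [180, 67, 120, 135])
```

The article's code additionally discards every detection whose label is not "person", which a caller of this helper would do by checking the returned label.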

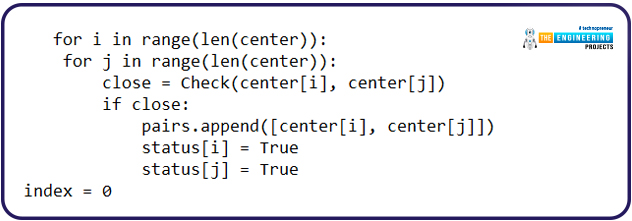

Then, compare the center of each box with the centers of all other boxes. If two centers are closer than the allowed threshold, set the status of both boxes to True.

for i in range(len(center)):
    for j in range(len(center)):
        close = Check(center[i], center[j])
        if close:
            pairs.append([center[i], center[j]])
            status[i] = True
            status[j] = True
index = 0
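
The nested loop above visits every pair twice, once as (i, j) and once as (j, i), so each close pair is appended to the list in both orders. A possible variation, sketched here with a hypothetical helper `find_close_pairs`, uses itertools.combinations to visit each pair once. The closeness rule is passed in as a function, so the Check() function from this article could be supplied; the demo rule below is a stand-in for illustration only.

```python
from itertools import combinations


def find_close_pairs(centers, too_close):
    """Return (pairs, status) for every unordered pair flagged by too_close.

    too_close is any function that takes two points and returns True or False.
    """
    status = [False] * len(centers)
    pairs = []
    for i, j in combinations(range(len(centers)), 2):
        if too_close(centers[i], centers[j]):
            pairs.append((centers[i], centers[j]))
            status[i] = True
            status[j] = True
    return pairs, status


def demo_rule(a, b):
    """Stand-in rule for the demo: flag centers less than 50 pixels apart in x."""
    return abs(a[0] - b[0]) < 50


print(find_close_pairs([(100, 200), (130, 200), (400, 200)], demo_rule))
# ([((100, 200), (130, 200))], [True, True, False])
```

The status list is the same as the one produced by the nested loop; only the number of comparisons and the duplicate entries in the pair list differ.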

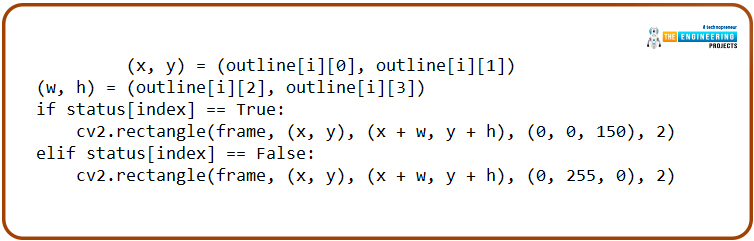

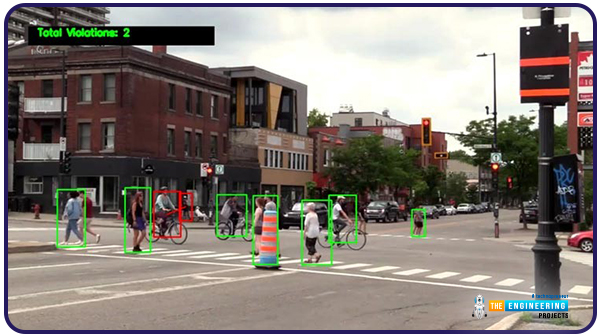

In the following lines, we use the box dimensions returned by the model to draw a rectangle around each person and show whether or not they are at a safe distance. If a person is too close to someone else, the box is drawn in red; otherwise, it is drawn in green.

(x, y) = (outline[i][0], outline[i][1])
(w, h) = (outline[i][2], outline[i][3])
if status[index] == True:
    cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 0, 150), 2)
elif status[index] == False:
    cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)

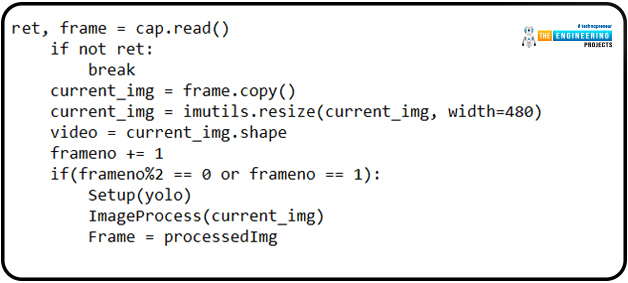

Now we're inside the main loop, where we read each video frame and analyze it to determine how far apart the people are.

ret, frame = cap.read()
if not ret:
    break
current_img = frame.copy()
current_img = imutils.resize(current_img, width=480)
video = current_img.shape
frameno += 1
if(frameno%2 == 0 or frameno == 1):
    Setup(yolo)
    ImageProcess(current_img)
    Frame = processedImg
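
The condition in the loop means that detection runs on the first frame and on every even-numbered frame. A tiny sketch, using the hypothetical helper `should_process`, makes the pattern visible for the first ten frames.

```python
def should_process(frame_number):
    """Mirror the loop's rule: run detection on frame 1 and on every even frame."""
    return frame_number % 2 == 0 or frame_number == 1


processed = [n for n in range(1, 11) if should_process(n)]
print(processed)  # [1, 2, 4, 6, 8, 10]
```

Skipping every other frame roughly halves the number of network passes, which helps on hardware as slow as the Raspberry Pi.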

In the following lines, we use the cv2.VideoWriter() function to save the output video to the file named by opname.

if create is None:
    fourcc = cv2.VideoWriter_fourcc(*'XVID')
    create = cv2.VideoWriter(opname, fourcc, 30, (Frame.shape[1], Frame.shape[0]), True)
create.write(Frame)

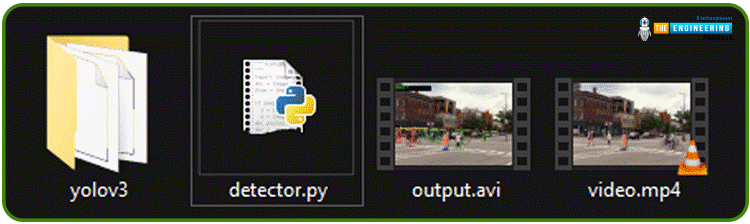

Testing the program

When satisfied with your code, launch a terminal on your Pi and go to the directory where you saved it. The code expects the following layout in the project folder: the script itself, the input video "newVideo.mp4", and a "yolov3" folder containing "yolov3.weights", "yolov3.cfg", and "coco.names".

The YOLOv3 weights can be downloaded from:

https://pjreddie.com/media/files/yolov3.weights

Sample videos are available from:

https://www.pexels.com/search/videos/pedestrians/
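
Before launching the detector, it can help to confirm that the model files are where the code looks for them. The sketch below is an optional pre-flight check; the helper name `missing_model_files` is hypothetical, and the file names are the ones the Setup() function opens inside the "yolov3" folder.

```python
import os

REQUIRED_FILES = ("yolov3.weights", "yolov3.cfg", "coco.names")


def missing_model_files(yolo_dir, required=REQUIRED_FILES):
    """Return the required model files that are absent from yolo_dir."""
    return [name for name in required
            if not os.path.isfile(os.path.join(yolo_dir, name))]


missing = missing_model_files("yolov3")
if missing:
    print("Missing from yolov3/:", ", ".join(missing))
else:
    print("All YOLO model files found.")
```

Run it from the project directory; any file it lists needs to be downloaded or moved into the "yolov3" folder first.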

Finally, save the Python script provided below as detector.py in the project folder described above. Once you've entered the project directory, run the following command:

python3 detector.py

I applied this code to a sample video I found on Pexels, and the results were interesting. The frame rate was poor, and the video took almost 11 minutes to process.

Changing cv2.VideoCapture(filename) to cv2.VideoCapture(0) allows you to test the code with a live camera instead of a video file. Follow these steps to use OpenCV on a Raspberry Pi to detect social distancing violations.
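
cv2.VideoCapture() accepts either an integer camera index or a file path, so the source can be chosen without editing the script each time. The following sketch uses a hypothetical helper, `parse_source`, that turns a text value such as a command-line argument into the right type.

```python
def parse_source(value):
    """Return an int camera index for digit strings, else the value as a file path."""
    text = str(value).strip()
    return int(text) if text.isdigit() else text


print(parse_source("0"))             # 0
print(parse_source("newVideo.mp4"))  # newVideo.mp4
```

The result could then be passed straight to the capture call, for example cv2.VideoCapture(parse_source(sys.argv[1])), assuming the script is started with the source as its first argument.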

Complete code

import numpy as np
import cv2
import imutils
import os
import time


def Check(a, b):
    # a and b are [x, y] box centers; the vertical gap is weighted by 550 / mean y.
    dist = ((a[0] - b[0]) ** 2 + 550 / ((a[1] + b[1]) / 2) * (a[1] - b[1]) ** 2) ** 0.5
    calibration = (a[1] + b[1]) / 2
    if 0 < dist < 0.25 * calibration:
        return True
    else:
        return False


def Setup(yolo):
    # Load the class labels and the YOLOv3 network from the given folder.
    global net, ln, LABELS
    weights = os.path.sep.join([yolo, "yolov3.weights"])
    config = os.path.sep.join([yolo, "yolov3.cfg"])
    labelsPath = os.path.sep.join([yolo, "coco.names"])
    LABELS = open(labelsPath).read().strip().split("\n")
    net = cv2.dnn.readNetFromDarknet(config, weights)
    ln = net.getLayerNames()
    ln = [ln[i[0] - 1] for i in net.getUnconnectedOutLayers()]


def ImageProcess(image):
    global processedImg
    (H, W) = (None, None)
    frame = image.copy()
    if W is None or H is None:
        (H, W) = frame.shape[:2]
    blob = cv2.dnn.blobFromImage(frame, 1 / 255.0, (416, 416), swapRB=True, crop=False)
    net.setInput(blob)
    starttime = time.time()
    layerOutputs = net.forward(ln)
    stoptime = time.time()
    print("Video is Getting Processed at {:.4f} seconds per frame".format((stoptime-starttime)))
    confidences = []
    outline = []
    # Keep only "person" detections with a confidence above 0.5.
    for output in layerOutputs:
        for detection in output:
            scores = detection[5:]
            maxi_class = np.argmax(scores)
            confidence = scores[maxi_class]
            if LABELS[maxi_class] == "person":
                if confidence > 0.5:
                    box = detection[0:4] * np.array([W, H, W, H])
                    (centerX, centerY, width, height) = box.astype("int")
                    x = int(centerX - (width / 2))
                    y = int(centerY - (height / 2))
                    outline.append([x, y, int(width), int(height)])
                    confidences.append(float(confidence))
    # Non-maximum suppression removes overlapping boxes for the same person.
    box_line = cv2.dnn.NMSBoxes(outline, confidences, 0.5, 0.3)
    if len(box_line) > 0:
        flat_box = box_line.flatten()
        pairs = []
        center = []
        status = []
        for i in flat_box:
            (x, y) = (outline[i][0], outline[i][1])
            (w, h) = (outline[i][2], outline[i][3])
            center.append([int(x + w / 2), int(y + h / 2)])
            status.append(False)
        # Compare every pair of box centers and flag the ones that are too close.
        for i in range(len(center)):
            for j in range(len(center)):
                close = Check(center[i], center[j])
                if close:
                    pairs.append([center[i], center[j]])
                    status[i] = True
                    status[j] = True
        index = 0
        # Red box for people who are too close, green box otherwise.
        for i in flat_box:
            (x, y) = (outline[i][0], outline[i][1])
            (w, h) = (outline[i][2], outline[i][3])
            if status[index] == True:
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 0, 150), 2)
            elif status[index] == False:
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
            index += 1
        for h in pairs:
            cv2.line(frame, tuple(h[0]), tuple(h[1]), (0, 0, 255), 2)
    processedImg = frame.copy()


create = None
frameno = 0
filename = "newVideo.mp4"
yolo = "yolov3/"
opname = "output2.avi"
cap = cv2.VideoCapture(filename)
time1 = time.time()
while(True):
    ret, frame = cap.read()
    if not ret:
        break
    current_img = frame.copy()
    current_img = imutils.resize(current_img, width=480)
    video = current_img.shape
    frameno += 1
    if(frameno%2 == 0 or frameno == 1):
        # Setup() reloads the network on every processed frame;
        # calling it once before the loop would be faster.
        Setup(yolo)
        ImageProcess(current_img)
        Frame = processedImg
        cv2.imshow("Image", Frame)
        if create is None:
            fourcc = cv2.VideoWriter_fourcc(*'XVID')
            create = cv2.VideoWriter(opname, fourcc, 30, (Frame.shape[1], Frame.shape[0]), True)
    create.write(Frame)
    if cv2.waitKey(1) & 0xFF == ord('s'):
        break

time2 = time.time()
print("Completed. Total Time Taken: {} minutes".format((time2-time1)/60))
cap.release()
if create is not None:
    create.release()  # finalize the output video file
cv2.destroyAllWindows()

Why is Social Distancing Detection helpful?

Convincing Workers

Since 41% of workers won't return to their desks until they feel comfortable, installing social distancing detection is an excellent approach to reassure them that the situation has been rectified. People without fevers can still be contagious; hence this solution is preferable to thermal imaging cameras.

Space Utilization

Using the detection program, you can find out which areas of the workplace are the most crowded. As a result, you'll have all the information you need to implement the best precautions.

Monitoring and Taking Measures

The software can also be connected to security video systems beyond the office, such as in a factory where workers are frequently close to one another. This makes it possible to keep an eye on the working environment and identify people who are standing too close to others.

Tracking the Queues

Queue monitoring is a valuable addition to security cameras for businesses in retail, healthcare, and other sectors where waiting in line is unavoidable. The cameras can monitor and recognize whether or not people are following the social distancing requirements. The system can also be configured to work with automatic barriers and digital signage to provide real-time alerts and health and safety information.

Drawbacks of Social Distancing

The drawbacks of social distancing include the following:

Its efficacy decreases when mosquitoes, contaminated food or water, or other vectors are predominantly responsible for spreading the disease.

People who are cut off from their usual social settings may become lonely and depressed.

Productivity drops, and other benefits of interacting with other people are lost.

Conclusion

This tutorial showed us how to build a social distance detection system. The system uses AI and deep learning to analyze visual data, and computer vision lets it estimate the distance between people. A red box appears around anyone who is closer to another person than the minimum acceptable threshold. We tested the program on pre-recorded footage of pedestrians. The system estimates an approximate distance between individuals, classifies it as either "Safe" or "Unsafe", and marks each detected person accordingly. The detector can also be applied to live video streams to build real-time applications. During pandemics, this technology can be combined with CCTV to monitor public spaces. Because screening large numbers of people this way is practical, such systems suit high-traffic areas such as airports, bus terminals, markets, streets, shopping mall entrances, campuses, and even workplaces and restaurants. Keeping an eye on the distance between people allows us to ensure that sufficient space is maintained between them.